01:00 PM - 01:25 PM

Description

The NVIDIA CloudXR SDK empowers developers to deliver highly complex applications and 3D data to low-powered VR & AR devices. We discuss what NVIDIA CloudXR is, how to get started integrating the SDK into your applications, and the benefits customers have reported during development. We also hear from Bentley Systems on how NVIDIA CloudXR introduces new ways of working while preserving and elevating existing AEC/O workflows for design and construction.

Speakers

01:30 PM - 01:55 PM

Description

Building digital twins of our environments is a key enabler for XR technology. In this session, we will cover several recent projects from InnoPeak Technology on 3D reconstruction (building digital twins) from monocular images. We first present MonoIndoor, a self-supervised framework for training depth-estimation neural networks in indoor environments, and consolidate a set of good practices for self-supervised depth estimation. We then introduce GeoRefine, a self-supervised online depth refinement system for accurate dense mapping. Finally, we discuss PlaneMVS, a novel end-to-end method that reconstructs semantic 3D planes using multi-view stereo.

Speakers

02:00 PM - 02:25 PM

Description

How hologram telecommunication delivers what every other communication technology leaves out: the parts that make us human.

Speakers

02:30 PM - 02:55 PM

Description

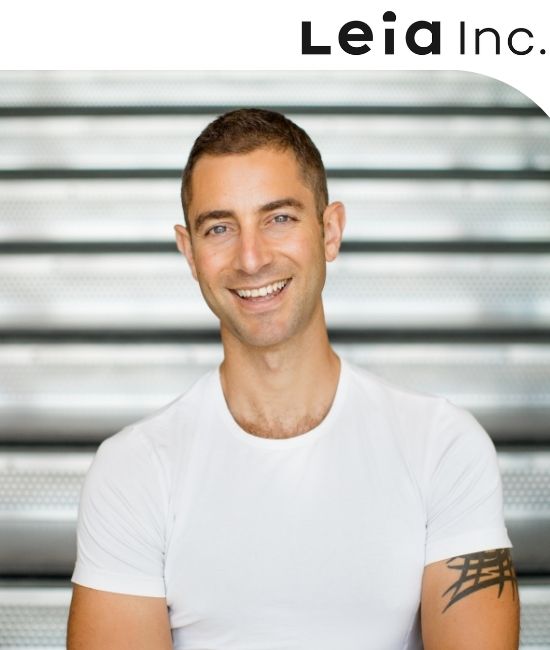

Headset-based AR/VR offers an immersive dive into these new digital worlds, but to many it still feels cumbersome and unfamiliar. As a result, mass adoption remains relatively slow.

3D Lightfield displays offer a naturally immersive, “window-like” 3D view into the Metaverse while leaving users’ faces unencumbered. They can be readily deployed on familiar devices, from smartphones and tablets to laptops and automotive displays.

Better still, this method ensures compatibility with much of the existing digital content ecosystem, hence democratizing access to the Metaverse and potentially accelerating its deployment.

In this talk, I will review our efforts at Leia to commercialize Lightfield-based mobile devices and our take on how to steadily ramp consumer adoption of the Metaverse.

Speakers

03:00 PM - 03:25 PM

Description

LiFi is a technology that delivers high-speed data wirelessly to XR devices using light waves. It lets you connect devices wirelessly where radio frequencies are not permitted for security or safety reasons. Signify (formerly Philips) is connecting XR devices at stable speeds of 250 Mbps, with ultra-low latency, in a way that is safe and secure. This technology is being deployed in hospitals, the military, oil and gas, mining, and manufacturing. Find out how LiFi will enable wireless XR with even higher data throughput for superior performance.

Speakers

03:40 PM - 04:35 PM

Description

How does augmented reality overlap with the shift from web2 to web3?

Amy will moderate a panel of experts on the convergence of AR with the growing adoption of blockchain, NFTs, and cryptocurrency.

Speakers

04:40 PM - 05:05 PM

Description

More than a decade ago, the industry started to signal interest in enabling core XR technologies. About 6 to 8 years into that phase, the first generation of XR devices reached the market with varying degrees of success. Just 2 years ago, we started to see an explosion in user interest in XR-related products, partly because the pandemic changed user behavior, but also thanks to companies trail-blazing in products and services. The question we continue to ask ourselves is: what devices and user experiences should be offered to end users in the next 3 to 5 years? Goertek is proud of its legacy and its continued investment in the XR category, and we look forward to using this session to engage with the XR community.

Speakers

05:10 PM - 05:35 PM

Description

Due to the demanding optical architectures of diffractive waveguide gratings used for augmented reality applications, the gratings must be manufactured to extremely high accuracies, or the image quality will suffer. The grating period has to match the design within tens of picometers, and the tolerances for the relative orientation of the gratings are in the arcsecond range. Both the production masters and the replicated gratings need to be characterized nondestructively, and the grating areas scanned to ensure uniformity. The measurement system should work for surface relief and volume holographic gratings in various material systems. We describe a Littrow diffractometer that can perform this challenging task. A narrow-band and highly stable laser source is used to illuminate a spot on the sample. Mechanical stages with high-accuracy encoders rotate and tilt the sample until the laser beam is diffracted back to the laser. This so-called Littrow condition is detected through a feedback loop with a beam splitter and a machine vision camera. The grating period and relative orientation can then be calculated from the stage orientation data. With a properly constructed system and custom software algorithms performing an optimized measurement sequence, it is possible to reach repeatability in the picometer and arcsecond range for the grating period and relative orientation, respectively. By carefully calibrating the stages or by using golden samples, absolute accuracy for the grating period can also reach the picometer range.
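As a rough illustration of how the grating period follows from the stage angle: at the Littrow condition the diffracted order returns along the incident beam, so the grating equation reduces to mλ = 2d sin θ_L, giving d = mλ / (2 sin θ_L). The minimal Python sketch below assumes first-order diffraction (m = 1), a hypothetical 532 nm laser, and a hypothetical 30° Littrow angle; these values are for illustration only and are not parameters of the instrument described in the talk. It also estimates, to first order, how far a one-arcsecond angle error shifts the computed period (|δd| ≈ d cot θ_L |δθ|), which suggests why arcsecond-level stage accuracy goes hand in hand with picometer-level period repeatability.

```python
import math

# One arcsecond expressed in radians.
ARCSEC = math.pi / (180 * 3600)


def littrow_period(wavelength_m: float, littrow_angle_rad: float, order: int = 1) -> float:
    """Grating period d from the Littrow condition m * lambda = 2 * d * sin(theta_L)."""
    if not 0.0 < littrow_angle_rad < math.pi / 2:
        raise ValueError("Littrow angle must be strictly between 0 and 90 degrees")
    return order * wavelength_m / (2.0 * math.sin(littrow_angle_rad))


def period_sensitivity(period_m: float, littrow_angle_rad: float, angle_error_rad: float) -> float:
    """First-order magnitude of the period error for a small angle error:
    |delta d| ~= d * cot(theta_L) * |delta theta|."""
    return period_m * abs(angle_error_rad) / math.tan(littrow_angle_rad)


if __name__ == "__main__":
    # Hypothetical example values, not taken from the talk.
    wavelength = 532e-9           # laser wavelength in metres
    theta = math.radians(30.0)    # measured Littrow angle from the stage encoders
    d = littrow_period(wavelength, theta)
    err = period_sensitivity(d, theta, ARCSEC)
    print(f"period = {d * 1e9:.3f} nm")
    print(f"1 arcsec angle error -> {err * 1e12:.2f} pm period error")
```

With these assumed values the period comes out at 532 nm, and a one-arcsecond angle error moves it by roughly 4.5 pm. A real instrument would also fold in encoder calibration and the relative-orientation (tilt) measurement, which this sketch leaves out.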

Speakers