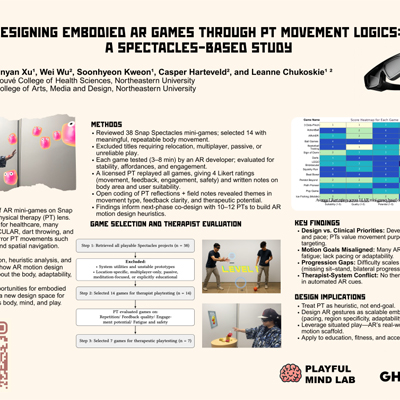

Designing Embodied AR Games Through Physical Therapy Movement - Lightning Talk

Presenter: Binyan Xu

Binyan Xu’s project examines off-the-shelf AR games on Snap Spectacles through the lens of physical therapy. Rather than treating rehabilitation as a narrow clinical application, the work uses PT reasoning to understand how motion is structured in wearable AR games. Many commercial AR mini-games already ask users to reach, throw, move through space, follow rhythm, and make spatial decisions. By analyzing these patterns with input from a licensed physical therapist, the project shows how AR gameplay can reveal deeper assumptions about the body, progression, adaptability, and embodied cognition.

The work is especially compelling because it reframes physical therapy as not only a medical domain but also a design framework for motion-based interaction. It suggests that better AR games and applications can be built by thinking more carefully about how bodies move, learn, adapt, and engage with space.

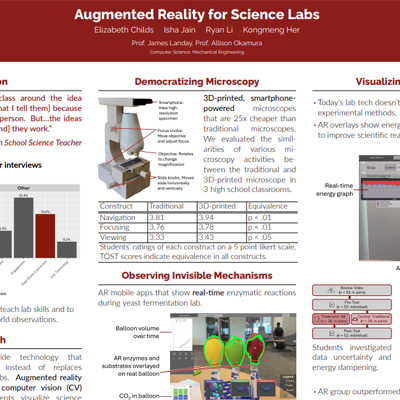

Augmented Reality for Hands-On Education - Lightning Talk

Presenter: Elizabeth Childs

Elizabeth Childs’ project explores how AR can support hands-on science education without replacing the physical lab. The work focuses on making invisible scientific processes visible while preserving the value of real-world experimentation. Rather than moving students entirely into a virtual environment, the project uses AR and computer vision to augment physical instruments and classroom labs.

The project includes low-cost, smartphone-powered microscopes, AR overlays for real-time enzymatic reactions, and visualizations that help students reason about energy transformation and uncertainty. It connects XR design to actual classroom needs, showing how AR can make science more accessible while preserving the tactile, experimental, and observational practices that make lab learning meaningful.

Real-Time Spatial Digital Twin of a Beef Cattle Production System - Lightning Talk

Presenter: Ruhee Nagulwar

Ruhee Nagulwar’s project brings XR into an unexpected but important setting: livestock production. The project presents a real-time spatial digital twin of a beef cattle production system built in Unreal Engine 5. It connects sensor data to a 3D agent-based simulation, allowing animal health, behavior, and environmental conditions to be visualized spatially.

The project shows how XR can make complex, high-volume data easier to interpret. Instead of treating animal health metrics as disconnected data streams, the system situates them inside a live spatial model with dashboards, minimaps, health states, and movement patterns. It points toward a future where digital twins become operational tools for monitoring, decision-making, and intervention, not just visual models.

Rekindle: Fostering Emotional Perspective-Taking Through Face-Tracking-Based Affective Interactions in VR Interactive Narratives

Presenter: Hector Fan

This project uses face tracking in VR to connect a player’s emotional expressions to narrative progression. In Rekindle, players express emotions that align with a character’s memories, turning facial emotion recognition into a mechanic for emotional perspective-taking and deeper narrative engagement.

AlphaRise: A Compassionate Neurofeedback Game for MS Fatigue Management

Presenter: Ned Shoaei

AlphaRise is a closed-loop EEG neurofeedback game for people with multiple sclerosis-related fatigue. The system adapts difficulty in real time based on brain states and introduces a “Compassionate Mode” that supports users when fatigue appears, rather than punishing them for struggling.

From Clinical Practice Needs to XR Design: Developing Immersive Virtual Reality for Nursing Education

Presenter: Cynthia Bradley

This project presents a scaffolded immersive VR learning series for nursing education across six programs. The work uses multi-patient scenarios to support clinical reasoning, prioritization, performance assessment, and repeatable practice in complex care situations.

Supporting 3D Design Conflict Resolution in Collaborative Mixed Reality

Presenter: Niloofar Sayadi

This mixed reality system helps remote collaborators customize digital twins of physical objects without altering the original object. Users create parallel design branches, merge edits, and resolve conflicts through spatial previews directly on the object surface.

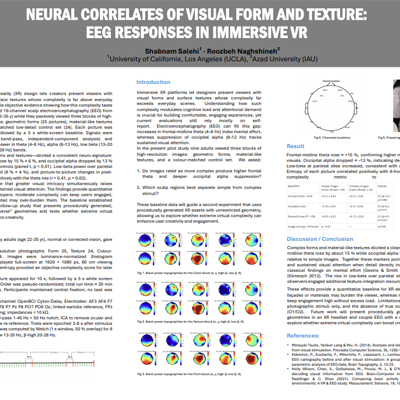

Neural Correlates of Visual Form and Texture: EEG Responses in Immersive VR

Presenter: Shabnam Salehi

This project studies how visual complexity in immersive environments affects cognitive load and attention. Using EEG, the work links complex forms and textures to neural markers of mental effort and visual engagement.

Civic Becoming in the Age of Simulation

Presenter: Tracie Yorke

This project examines how XR and AI environments shape civic identity and meaning-making. Using simulated environments and AI-driven avatars, the work explores how people understand civic life when participation is mediated by spatial design and generative AI.

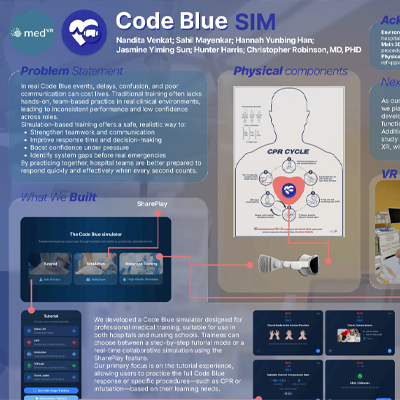

Code Blue Simulation

Presenter: Yunbing Han

Code Blue Simulation is an XR training system for emergency medical response. The project helps hospital teams practice high-pressure procedures, improve communication, and build confidence through realistic, hands-on simulation.

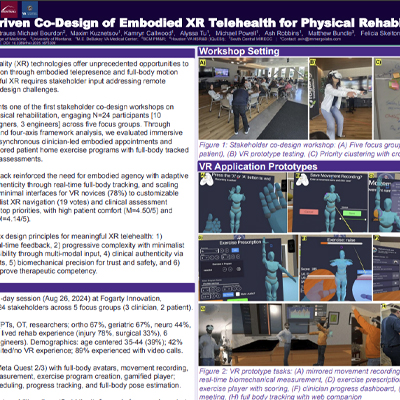

Stakeholder-Driven Co-Design of Embodied XR Telehealth for Physical Rehabilitation

Presenter: Aviv Elor

This project explores embodied XR telehealth for physical rehabilitation through stakeholder co-design with clinicians, patients, designers, and engineers. The system includes full-body avatars, biomechanical feedback, clinician-authored exercise programs, and design principles for remote rehabilitation.

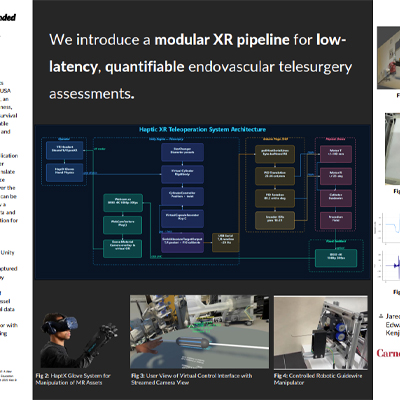

A Haptically-Enabled Extended Reality Platform for Neurovascular Telesurgery

Presenter: Jared Scott

This project introduces an XR platform for neurovascular telesurgery assessment. It combines a Unity-based virtual operating environment, haptic feedback, and a robotic catheter guidewire system to support endovascular procedure training and evaluation.

Eye Tracking on XR and AI Smart Glasses

Presenter: Majd Khalaf

This project presents CapGaze, an eye tracker for smart glasses based on capacitive sensing. The system estimates gaze using electrodes around the eye while avoiding some of the power, cost, lighting, and privacy limitations of camera-based systems.

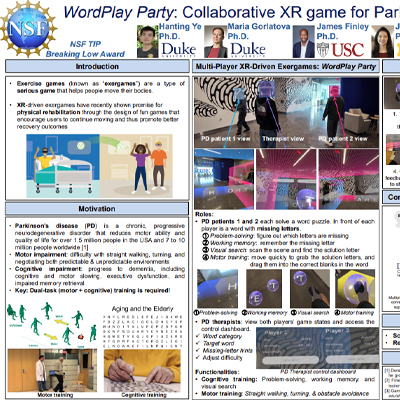

WordPlay Party: Collaborative XR Game for Parkinson’s Rehabilitation

Presenter: Hanting Ye

WordPlay Party is a multiplayer XR exergame for Parkinson’s rehabilitation. The game combines cognitive training, such as word solving and working memory, with motor training, including walking, turning, reaching, and obstacle avoidance.

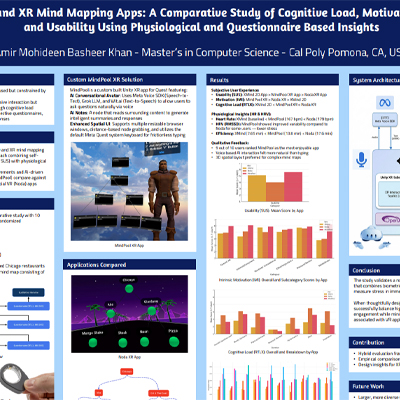

2D and XR Mind Mapping Apps: A Comparative Study of Cognitive Load, Motivation, and Usability Using Physiological and Questionnaire-Based Insights

Presenter: Amir Mohideen Basheer Khan

This project compares traditional 2D mind mapping with commercial and custom XR mind mapping tools. By combining questionnaires with physiological measures, the study examines how XR and AI-enhanced spatial tools affect usability, motivation, and cognitive load.

Final Thoughts

Taken together, the accepted posters show how broad XR research has become. Some projects focus on clinical care and training, while others explore education, sensing, collaboration, storytelling, agriculture, civic identity, and creativity. What connects them is a shared interest in building systems that can be experienced directly.

That is what makes this year’s AWE poster track exciting. The work has already been selected, and at AWE 2026 it will be demonstrated, discussed, and experienced on the expo floor. Attendees will be able to talk with researchers, try prototypes, ask questions, and imagine where these systems might go next. For a field built around presence, interaction, and immersion, that kind of exchange feels especially important.